How to tell a bike from a Tyrannosaurus rex

At its Cloud Next 2017 conference in San Francisco, Google unveiled a godsend for companies that produce large amounts of video content. The tech giant demonstrated a video content analysis API that works on files stored in Google Cloud Storage. The API automatically associates a rich taxonomy of keywords with each video, making its content searchable with conventional search engines.

Image recognition tools already exist, such as the facial recognition features found in various photo management applications. What is special about Google's tool is that it automatically analyzes any digital video. Until recently, videos could only be searched through metadata that people had attached to them by hand, because to text-based search tools a video is nothing more than an indecipherable mass of bits. Who hasn't cursed while searching YouTube for a poorly indexed video whose keywords were wrong or missing? Audio recognition was a step forward for machine learning; video recognition is the final step.

How it works

Google's tool, provisionally named Cloud Video Intelligence, is currently available only as a private beta to paying customers of Google's Cloud platform.

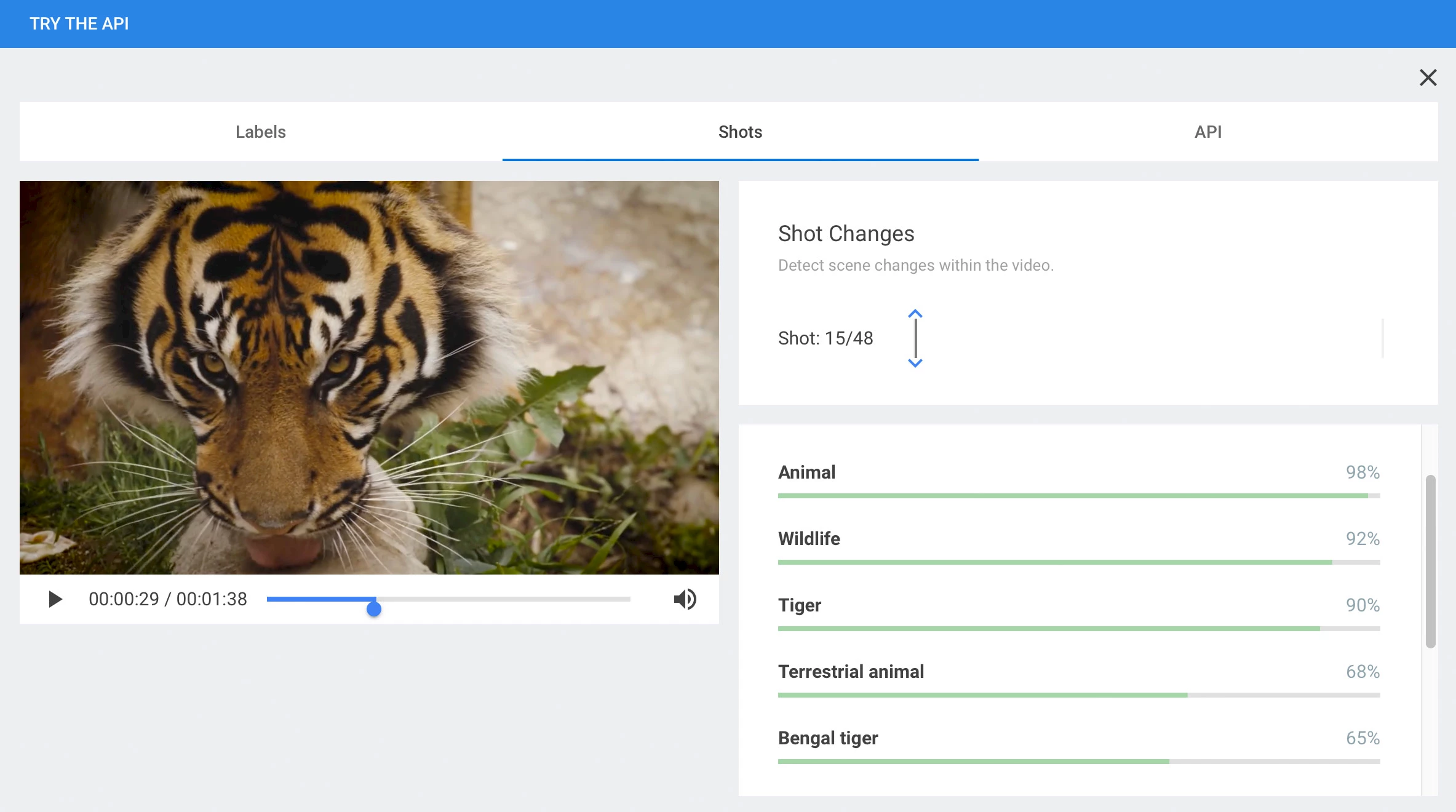

The API seeks to identify specific objects, places, people and animals in any video. Each identified item is catalogued under keywords suggested by the API, and each keyword comes with a certainty score.

For example, the API might be reasonably certain that it has found an animal, probably a tiger, possibly a Bengal tiger, with a percentage of certainty attached to each of these deductions. And since the analysis relies on Google's machine learning technology, accuracy should improve as the system is exposed to more and more content.
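To make the idea of nested keywords with certainty scores more concrete, here is a minimal Python sketch. It does not call Google's API: the `Label` structure, the `confident_labels` and `lineage` helpers, and all the scores are hypothetical, chosen only to mirror the tiger example above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Label:
    """One keyword suggested for a video segment (hypothetical structure)."""
    description: str
    confidence: float             # certainty score between 0.0 and 1.0
    parent: Optional[str] = None  # broader keyword this one refines, if any


# Hypothetical scores for the tiger example; not real API output.
labels = [
    Label("animal", 0.97),
    Label("tiger", 0.81, parent="animal"),
    Label("Bengal tiger", 0.54, parent="tiger"),
]


def confident_labels(labels, threshold):
    """Return keywords whose certainty meets the threshold, most certain first."""
    kept = [label for label in labels if label.confidence >= threshold]
    kept.sort(key=lambda label: label.confidence, reverse=True)
    return [label.description for label in kept]


def lineage(labels, name):
    """Follow parent links from a specific keyword up to its broadest category."""
    by_name = {label.description: label for label in labels}
    chain = []
    current = by_name.get(name)
    while current is not None:
        chain.append(current.description)
        current = by_name.get(current.parent) if current.parent else None
    return chain


print(confident_labels(labels, 0.75))   # ['animal', 'tiger']
print(lineage(labels, "Bengal tiger"))  # ['Bengal tiger', 'tiger', 'animal']
```

A search engine indexing such output could store every keyword in the chain, so that a query for "animal" still finds a clip tagged only as a Bengal tiger, while the threshold decides how speculative a keyword may be before it is ignored.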

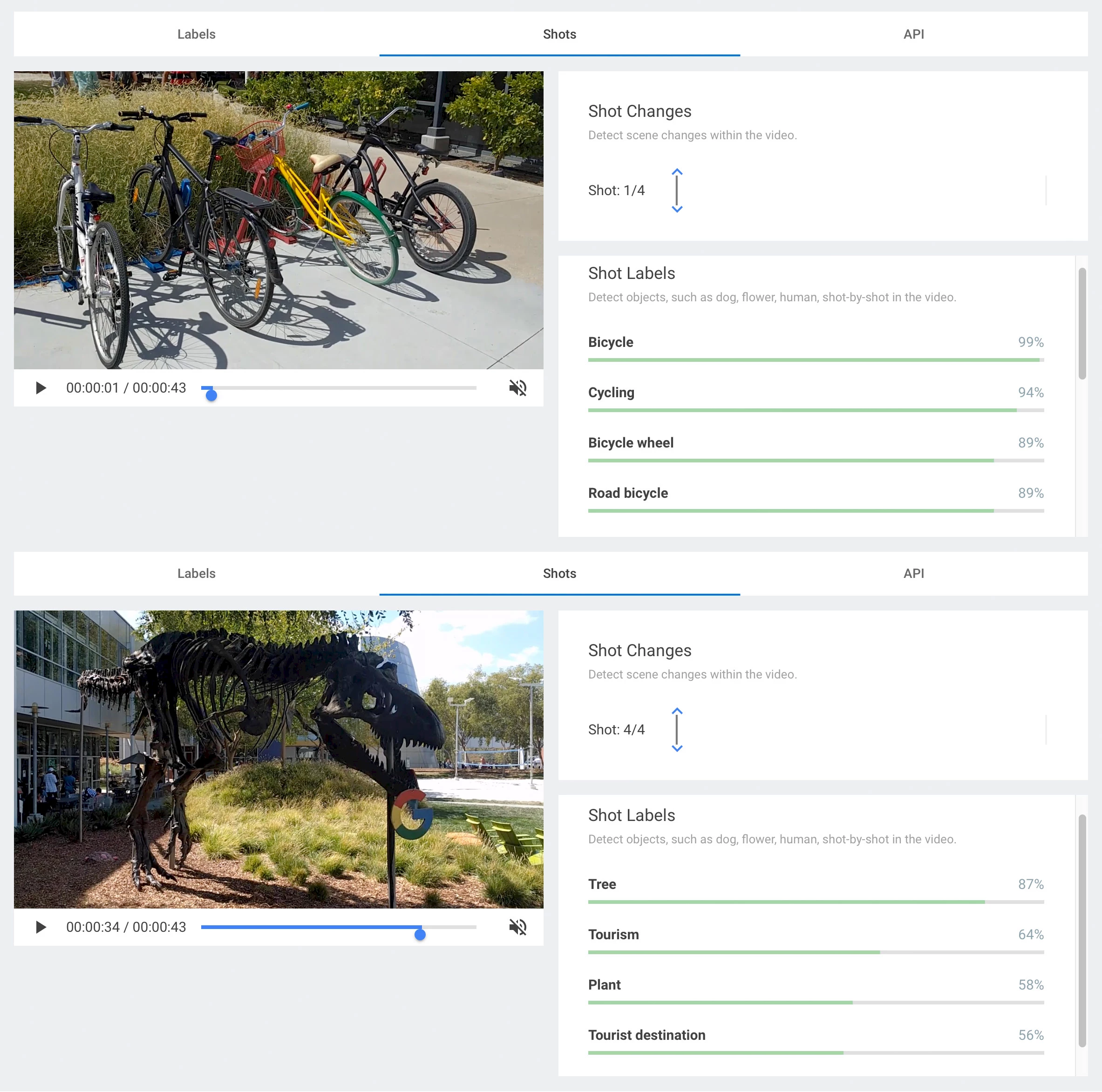

Though the API excels at spotting bicycles, even Google-coloured ones, it has more trouble with Stan, the resident T. rex on the Mountain View campus…

Early results are not particularly accurate, but that is typical of Google, whose strategy is to get into the market as quickly as possible. Its mantra, after all, is "Launch and Iterate", i.e. launch first, improve later. Cynics would say the real strategy is to ship a buggy, incomplete application as is, leave customers to find the bugs, apologize, fix them, release a new version, and repeat.

Many potential applications

Media companies that manage large archives of footage will finally be able to catalogue their content automatically, and more efficiently, accurately and cheaply than by hand. Say a documentary company is looking for clips containing a Ford Model T. The tool could scan an entire archive and extract every sequence featuring that car, even where it appears only incidentally.
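Once every segment of an archive carries keywords and certainty scores, the Model T search boils down to an inverted index: a map from each keyword to the segments where it was detected. The Python sketch below is a minimal illustration under that assumption; the `Segment` structure, file names, timings and scores are all hypothetical, and no Google API is involved.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    """A labelled stretch of an archived video (hypothetical structure)."""
    video: str
    start_s: float
    end_s: float
    label: str
    confidence: float


def build_index(segments):
    """Map each lower-cased keyword to the segments it was detected in."""
    index = defaultdict(list)
    for seg in segments:
        index[seg.label.lower()].append(seg)
    return index


def find_clips(index, label, min_confidence=0.5):
    """Return (video, start, end) tuples for a keyword, sorted by video then start time."""
    hits = [s for s in index.get(label.lower(), []) if s.confidence >= min_confidence]
    return sorted((s.video, s.start_s, s.end_s) for s in hits)


archive = [
    Segment("newsreel_1923.mp4", 12.0, 18.5, "Ford Model T", 0.88),
    Segment("newsreel_1923.mp4", 40.0, 41.0, "Ford Model T", 0.35),  # too uncertain, filtered out
    Segment("parade_1931.mp4", 3.0, 9.0, "horse", 0.92),
    Segment("parade_1931.mp4", 70.0, 75.5, "Ford Model T", 0.67),  # incidental, in the background
]

index = build_index(archive)
print(find_clips(index, "ford model t"))
# [('newsreel_1923.mp4', 12.0, 18.5), ('parade_1931.mp4', 70.0, 75.5)]
```

The `min_confidence` parameter is the editorial trade-off: lowering it surfaces more incidental appearances at the cost of more false positives for a researcher to weed out.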

The API's greatest strength is that it uses artificial intelligence to add a layer of contextual analysis. Rather than recognizing images in isolation, it analyzes a continuous sequence of frames, which means it can detect scene and shot changes. In future, the system should be able to recognize key moments in a recording, for example every try, free kick and goal in a rugby match.

Another potential application is live surveillance-camera footage. A system could automatically detect problems and raise the alarm based on predetermined criteria, such as a particular object moving, a pedestrian falling or an illegally parked car.
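Raising the alarm "based on predetermined criteria" can be pictured as a small rule table applied to the stream of detections that a video analysis service returns. The Python sketch below assumes such a stream already exists; the `Detection` structure, zone names, rule table and durations are hypothetical illustrations, not part of Google's product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """One object reported on a camera feed (hypothetical structure)."""
    camera: str
    time_s: float
    label: str
    zone: str  # operator-defined area of the frame, e.g. a no-parking bay


# Hypothetical rules: (label, zone) -> (alarm message, seconds the condition must persist)
ALARM_RULES = {
    ("car", "no_parking"): ("illegally parked car", 300.0),
    ("person_fallen", "platform"): ("pedestrian has fallen", 0.0),
}


def check_alarms(detections, rules):
    """Raise each (camera, message) alarm once its condition has persisted long enough.

    Simplification: a condition is timed from the first time it is seen and never
    reset, so a car that leaves and comes back is treated as if it never left.
    """
    first_seen = {}
    raised = []
    for d in sorted(detections, key=lambda det: det.time_s):
        rule = rules.get((d.label, d.zone))
        if rule is None:
            continue  # no predetermined criterion for this object in this zone
        message, min_duration_s = rule
        key = (d.camera, d.label, d.zone)
        first_seen.setdefault(key, d.time_s)
        alarm = (d.camera, message)
        if alarm not in raised and d.time_s - first_seen[key] >= min_duration_s:
            raised.append(alarm)
    return raised


feed = [
    Detection("cam1", 0.0, "car", "no_parking"),
    Detection("cam1", 60.0, "car", "no_parking"),
    Detection("cam2", 5.0, "person_fallen", "platform"),
    Detection("cam2", 10.0, "car", "road"),  # no rule for cars on the road
    Detection("cam1", 400.0, "car", "no_parking"),
]
print(check_alarms(feed, ALARM_RULES))
# [('cam2', 'pedestrian has fallen'), ('cam1', 'illegally parked car')]
```

Keeping the criteria in a plain table, separate from the recognition step, means operators could change what counts as an incident without retraining anything; the duration field is what distinguishes a car briefly stopping from one that is actually parked.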

In this era of new media, the survival of traditional media may depend on their ability to mine their archives and find interesting new ways to repackage existing content. Automating cataloguing and searching, two processes that are currently highly labour-intensive, could greatly improve content management.