Developing for Microsoft’s HoloLens

This article discusses a few technical issues we faced while developing the HoloLens brain demo that was introduced on this page.

Microsoft’s decision to team up with Unity has made life much simpler for HoloLens developers. With a bit of Unity knowledge, you can get going very quickly.

However, there are a few surprises in store for newcomers to augmented or virtual reality apps. Many of the issues we faced while creating this demo had to do with the UI, which requires a very different approach from regular Unity apps.

Thanks to the cross-platform nature of Unity, most other capabilities, like physics, animation and lighting, don’t require specific changes for HoloLens.

Getting your Unity application onto the HoloLens device takes three steps: export the Visual Studio solution from Unity, build the Visual Studio solution, and deploy the resulting package to either the emulator or the actual device.

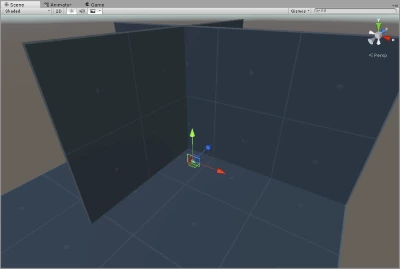

While iterating, we tried to do most of the development in Unity. We built a second scene that mimics the final HoloLens scene, in which we could quickly fix scripts and animations without wasting time exporting to the device. The development scene uses a first-person player controller as its camera, a basic environment made of planes to simulate a room, and a different input script to replace the gaze functionality that is only available on the emulator or the HoloLens device.

Simple Environment

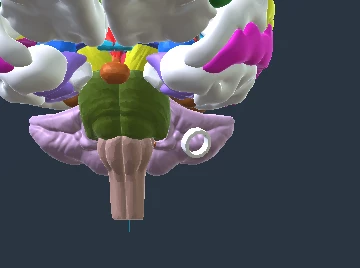

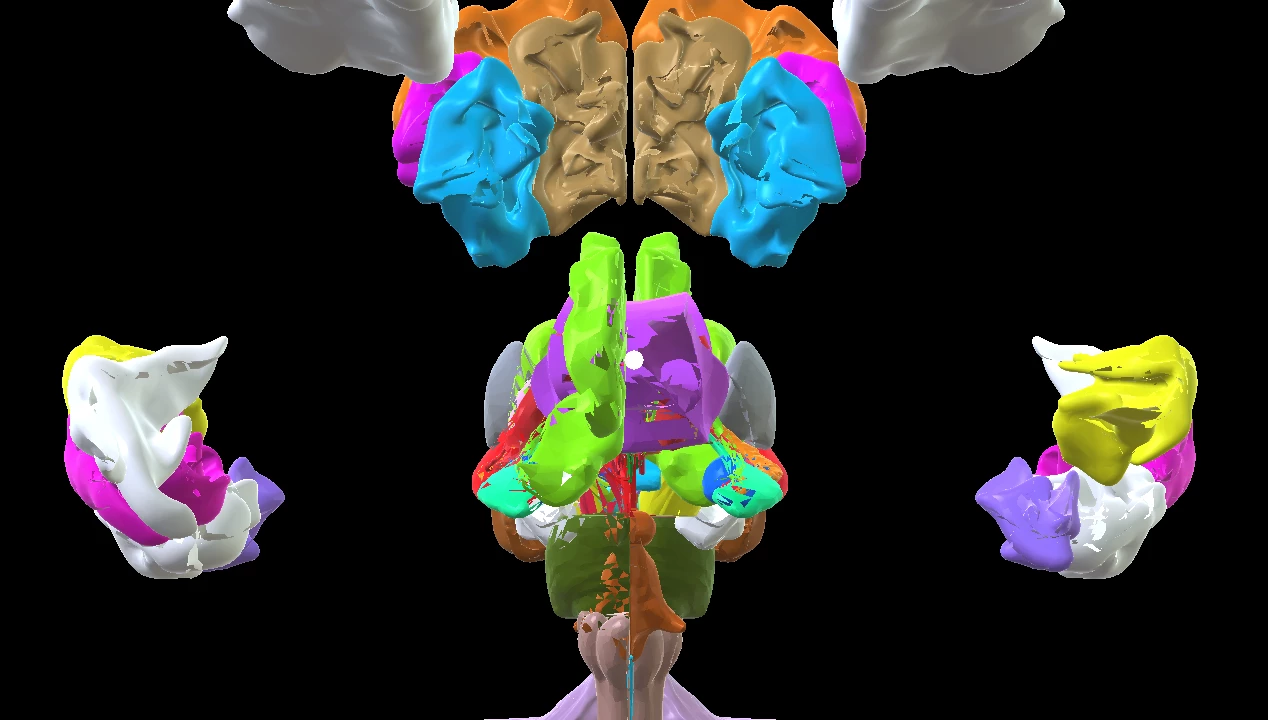

Brain Objects Close-Up

UI Issues

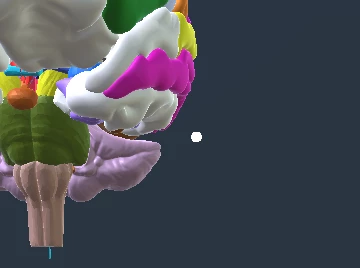

Reticle a.k.a. Crosshair

Microsoft provides excellent tutorials for the beginning HoloLens developer. In the 101 tutorial they provide scripts for a special cylindrical cursor, which they orient perpendicular to the surface the user is aiming at.

This could be useful depending on the application type, but for our purposes we wanted a more traditional overlay-style cursor that stays fixed in the centre of the screen.

Dynamic Reticle

Fixed Cursor

All right – piece of cake: “Let’s use a Unity UI image in overlay mode”. Unfortunately, you quickly realize that Unity UI in overlay mode simply does not show up on the device.

Our second attempt was to place a disc facing the user. The disc was added as a child game object of the camera so that it would always stay in front of the user no matter where the user looked.

This is where we hit a fundamental difference between virtual or augmented reality apps and regular apps. The visual effect of this setup is very uncomfortable. It’s like putting a quarter a few inches in front of your face and trying to keep both it and the background in focus at the same time.

It doesn’t work. You need the disc to be far enough to avoid this unpleasant effect.

The next step was to move the disc towards the raycast hit location. The problem was that moving the disc further away made it look smaller. The solution was to scale the disc in proportion to its distance from the camera, which keeps its perceived size constant.

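    // Runs every frame in the reticle's script (for example in Update()).
    // distanceToObject is not defined in this excerpt; it is a float field
    // holding the preferred distance between the user and the reticle.
    var headPosition = Camera.main.transform.position;
    var gazeDirection = Camera.main.transform.forward;

    RaycastHit hitInfo;
    if (Physics.Raycast(headPosition, gazeDirection, out hitInfo))
    {
        Vector3 hitRay = hitInfo.point - headPosition;
        Vector3 headOffset = gazeDirection * distanceToObject;

        // Never push the reticle past the surface the user is looking at.
        if (headOffset.sqrMagnitude > hitRay.sqrMagnitude)
        {
            headOffset = hitRay;
        }

        transform.position = headPosition + headOffset;

        // Scale with distance so the perceived size stays constant.
        float rayLength = headOffset.magnitude;
        transform.localScale = new Vector3(rayLength, rayLength, rayLength);
    }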

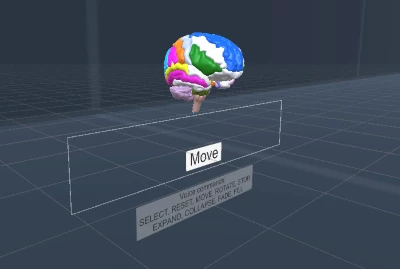

Text Bubbles

The next issue we had to deal with was how to display text bubbles for the user. We used the same strategy because, once again, it would be uncomfortable for the user to have a text overlay right in front of the camera. The UI panels and text are placed in world coordinates. Their only special feature is a script that implements billboard behaviour, which keeps these game objects facing the user at all times.
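As a rough illustration of the billboard behaviour described above, here is a minimal sketch. The class name `Billboard` is hypothetical and this is not the script used in the demo. It assumes the panel is a world-space Unity UI object, which is readable when its forward axis points away from the viewer.

```csharp
using UnityEngine;

// Hypothetical billboard component: keeps a world-space panel facing the user.
public class Billboard : MonoBehaviour
{
    // LateUpdate runs after Update, so the head pose for this frame is final.
    void LateUpdate()
    {
        if (Camera.main == null)
        {
            return;
        }

        // Vector from the user's head to the panel.
        Vector3 awayFromHead = transform.position - Camera.main.transform.position;

        // LookRotation is undefined for a zero vector, so skip that case.
        if (awayFromHead.sqrMagnitude > 0f)
        {
            // World-space UI is readable when its forward axis points away
            // from the viewer, hence "away from head" rather than "towards".
            transform.rotation = Quaternion.LookRotation(awayFromHead);
        }
    }
}
```

Attaching a component like this to the root of each text bubble would be enough. If the panels should stay upright when the user tilts their head, one option is to zero the `y` component of the vector before calling `LookRotation`.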

These info bubbles point to specific regions of the brain. A simple scaled ramp object from the standard prototype package was flattened and positioned at 90 degrees next to the text panels to create an arrow effect. The origin of the combined game object containing the arrow and the text panels is set at the pointy end and the game object simply gets moved to the brain part’s origin in a script.

Text Bubble

Buttons

Another limitation we found concerned button interaction, which is usually very easy to do with Unity. Ultimately, we implemented our own focus events to change the move button’s state when the user gazes at it. Microsoft provides a Unity interaction script for UI here that may be helpful if you need scroll bars and/or sliders in your app.
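To give an idea of what custom focus events can look like, here is a minimal sketch. It is not the demo's actual code. The class name `GazeFocusDispatcher` and the message names `OnGazeEnter` and `OnGazeLeave` are assumptions made for illustration, and it assumes each button has a collider so that a physics raycast can hit it.

```csharp
using UnityEngine;

// Hypothetical gaze dispatcher: attach one instance to any object in the scene.
public class GazeFocusDispatcher : MonoBehaviour
{
    // The object the user was gazing at during the previous frame, if any.
    private GameObject focused;

    void Update()
    {
        if (Camera.main == null)
        {
            return;
        }

        Transform head = Camera.main.transform;
        GameObject hitObject = null;

        // Cast a ray along the gaze direction and remember what it hits.
        RaycastHit hitInfo;
        if (Physics.Raycast(head.position, head.forward, out hitInfo))
        {
            hitObject = hitInfo.collider.gameObject;
        }

        // Nothing to do while the gaze stays on the same object.
        if (hitObject == focused)
        {
            return;
        }

        // Tell the old object it lost focus and the new one it gained focus.
        // DontRequireReceiver avoids errors on objects without handlers.
        if (focused != null)
        {
            focused.SendMessage("OnGazeLeave", SendMessageOptions.DontRequireReceiver);
        }
        if (hitObject != null)
        {
            hitObject.SendMessage("OnGazeEnter", SendMessageOptions.DontRequireReceiver);
        }

        focused = hitObject;
    }
}
```

A button script would then implement `void OnGazeEnter()` and `void OnGazeLeave()` to swap its sprite or colour. Sending messages keeps the dispatcher unaware of what a button does with the focus change.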

So UI requires a very different approach for virtual reality apps, and after reading a bit about it, I think there are many best practices left to discover in this area. UX designers, the field is yours for the taking!

Rendering

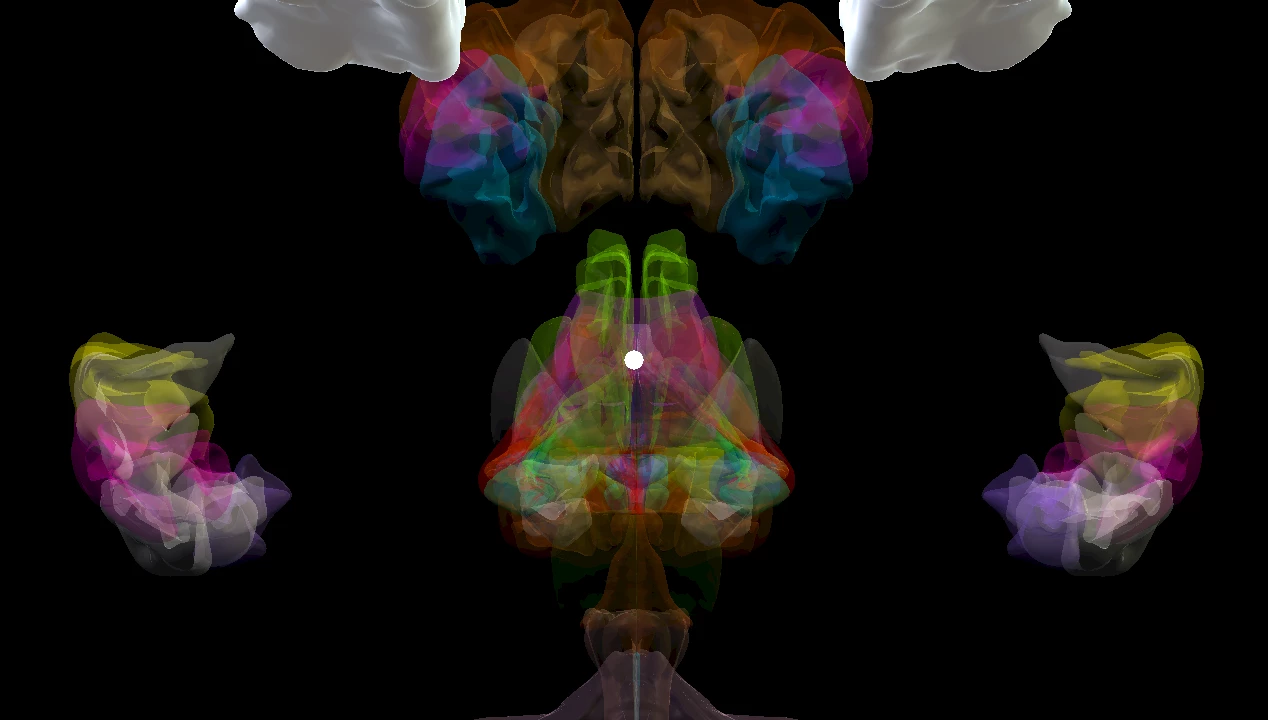

Next up, let’s briefly discuss adding transparency to the model. The transparency mode of the standard Unity shader caused some strange artifacts on the device, because the objects that make up the brain overlap. Here is the script we had tried for switching a material to that transparency mode at runtime.

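    // Switches a material that uses the Standard shader to Fade mode.
    private void EnableFadeMode(Material material)
    {
        // Classic alpha blending: source alpha over the existing colour.
        material.SetInt("_SrcBlend", (int)UnityEngine.Rendering.BlendMode.SrcAlpha);
        material.SetInt("_DstBlend", (int)UnityEngine.Rendering.BlendMode.OneMinusSrcAlpha);
        // Fade mode does not write to the depth buffer.
        material.SetInt("_ZWrite", 0);
        material.DisableKeyword("_ALPHATEST_ON");
        material.EnableKeyword("_ALPHABLEND_ON");
        material.DisableKeyword("_ALPHAPREMULTIPLY_ON");
        // 3000 is the start of the Transparent render queue.
        material.renderQueue = 3000;
    }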

We ended up using the Unity legacy transparent shader and packaging it with the app. The Unity setting for this is well hidden: you must go to Edit -> Project Settings -> Graphics -> Always Included Shaders. When fading the brain parts, we dynamically switch the shader of each part’s material to the legacy transparent shader.
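The shader switch can be sketched roughly as follows. This is an illustration rather than the demo's actual code. The class name `PartFader` is hypothetical, and the shader name `Legacy Shaders/Transparent/Diffuse` is an assumption, since the article does not say which legacy transparent variant was used.

```csharp
using UnityEngine;

// Hypothetical helper: attach to a brain part that has a Renderer.
public class PartFader : MonoBehaviour
{
    // Assumed shader name; substitute the legacy variant your project includes.
    private const string LegacyShaderName = "Legacy Shaders/Transparent/Diffuse";

    // Switches this part to the legacy transparent shader and sets its opacity.
    public void MakeTransparent(float alpha)
    {
        // Shader.Find only succeeds at runtime if the shader is in the build,
        // which is what the Always Included Shaders list guarantees.
        Shader legacy = Shader.Find(LegacyShaderName);
        if (legacy == null)
        {
            Debug.LogWarning("Shader not found in build: " + LegacyShaderName);
            return;
        }

        // Renderer.material returns an instance owned by this renderer,
        // so other parts sharing the original material are not affected.
        Material material = GetComponent<Renderer>().material;
        material.shader = legacy;

        // The main colour's alpha channel controls the transparency.
        Color color = material.color;
        color.a = Mathf.Clamp01(alpha);
        material.color = color;
    }
}
```

Calling `MakeTransparent(0.3f)` on a part would render it at 30% opacity. Parts with several materials would need the same treatment applied to each entry of `Renderer.materials`.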

Standard Shader

Legacy Transparent Shader

Performance

One thing we struggled with was performance. The brain model we used, which could be split into multiple parts, had very highly detailed meshes. We alleviated this problem somewhat by doing a first pass of automatic mesh reduction. We didn’t have time to do a full re-topology, so there is still a bit of lag when moving around the brain model. The HoloLens is no slouch, but it clearly doesn’t match desktop-grade PC graphics cards.

Voice Commands

This is one feature of the demo that was a walk in the park to implement, and that worked right out of the box. You just use the script provided in Tutorial 101, write the text version of the command, and add a handler for the command.
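For readers who have not opened the tutorial, the pattern looks roughly like the sketch below, which is built on Unity's `KeywordRecognizer`. It is a simplified illustration and not the tutorial's script. The class name `VoiceCommands` and the phrases are hypothetical placeholders.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using UnityEngine;
using UnityEngine.Windows.Speech;

// Hypothetical voice command component: maps spoken phrases to handlers.
public class VoiceCommands : MonoBehaviour
{
    private KeywordRecognizer recognizer;
    private readonly Dictionary<string, Action> commands =
        new Dictionary<string, Action>();

    void Start()
    {
        // Placeholder phrases: the text version of each command and its handler.
        commands.Add("Show labels", () => Debug.Log("Show labels requested"));
        commands.Add("Hide labels", () => Debug.Log("Hide labels requested"));

        // The recognizer listens only for the phrases it is given.
        recognizer = new KeywordRecognizer(commands.Keys.ToArray());
        recognizer.OnPhraseRecognized += OnPhraseRecognized;
        recognizer.Start();
    }

    // Called by Unity's speech system when one of the phrases is heard.
    private void OnPhraseRecognized(PhraseRecognizedEventArgs args)
    {
        Action handler;
        if (commands.TryGetValue(args.text, out handler))
        {
            handler();
        }
    }

    void OnDestroy()
    {
        // Release the recognizer when the component goes away.
        if (recognizer != null)
        {
            if (recognizer.IsRunning)
            {
                recognizer.Stop();
            }
            recognizer.Dispose();
        }
    }
}
```

Adding a new command then means adding one dictionary entry. The app also needs the Microphone capability enabled in Unity's player settings for speech recognition to work on the device.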

Unity and Visual Studio Tools

Here are a few workarounds for some errors in Visual Studio that we encountered while building the app:

- We had to periodically restart Visual Studio to prevent the DEP0001 deployment error.

- We had a SerializationWeaver tool error. Make sure your Unity project name has no spaces.

Also, make sure to follow all the configuration steps in Tutorial 100. Some of them are missing from Tutorial 101E, and the problem we ran into when configuring a new project was that our application was not getting maximized on start-up.

Conclusion

I hope these tips help you clear a few hurdles in this otherwise amazing technology that Microsoft has provided to us.

Happy coding!