Weekly Tech Recap - № 148 - AIY Vision kit, new Android’s cheeseburger, cyborg beetles, Furlexa, etc.

Google solves its cheeseburger controversy

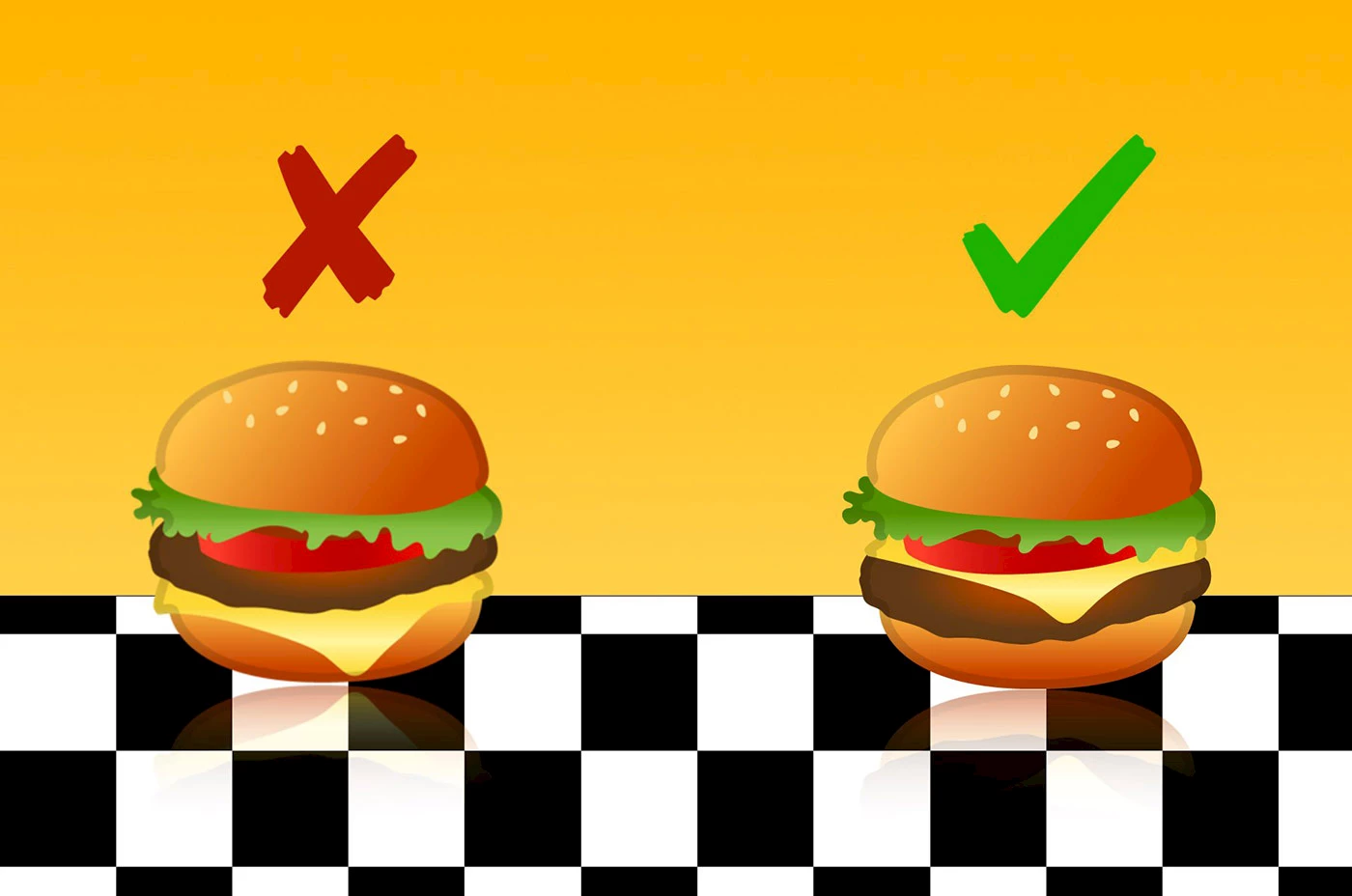

Android’s cheeseburger. © Emojipedia.

Remember last month’s brouhaha about the cheeseburger emoji for Android 8.0, when Google’s designers had the audacity to put the cheese slice under the beef patty? Right away, Google CEO Sundar Pichai was in full damage control mode; Google’s restaurant in Mountain View even temporarily adapted its burger recipe, serving the unorthodox version of the controversial burger. Fast-forward one month, and Android 8.1 has rectified the burger bug. Its designers also used the opportunity to tweak their beer stein emoji.

⇨ Emojipedia, “Google fixes burger emoji.”

New Google AIY kit

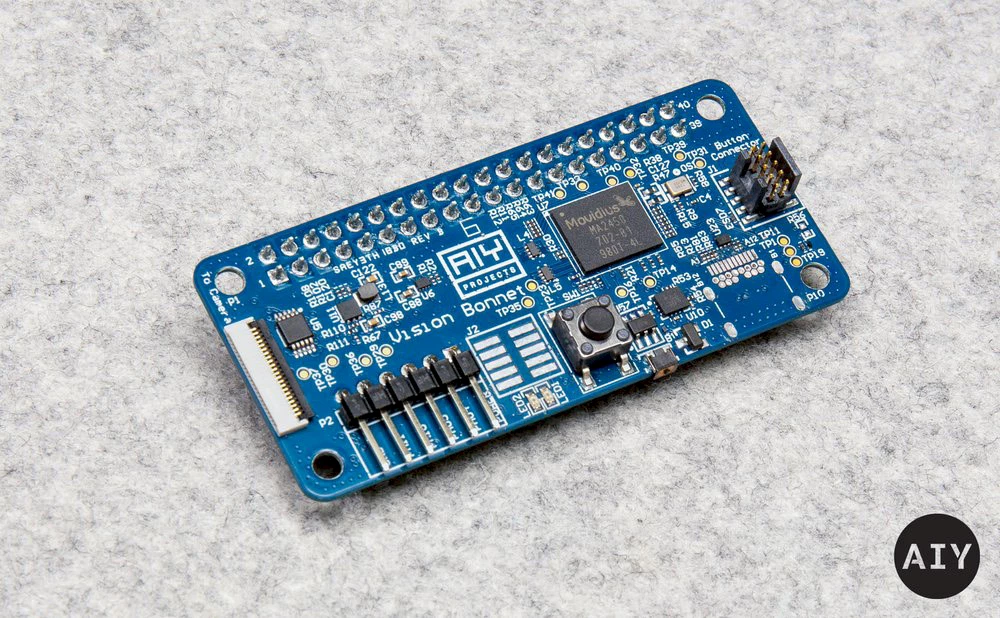

Vision Bonnet board. © Google.

AIY Vision Kit. © Google.

Last May, Google released Voice HAT, its first AIY Project kit, which stemmed from its partnership with the Raspberry Pi Foundation. Yesterday, Billy Rutledge, Google’s AIY Project Director, showed off Google’s new image recognition kit. The “Vision Kit” is a do-it-yourself image recognition device that can see and identify objects. It relies on TensorFlow, Google’s open-source machine learning framework. In addition to the kit, you’ll need a Raspberry Pi Zero W board, a Camera Module V2, a power source, and an SD card. The main component of the kit is a Vision Bonnet accessory board for Raspberry Pi. The board comes equipped with an Intel Movidius MA2450 processor, a low-power vision processing unit that can run neural network models. The software includes three neural network models: one that can recognize up to a thousand everyday items, one that can recognize faces and expressions, and one that is a “person, cat and dog detector.” Users can even train and retrain their models with TensorFlow. This brings to mind many possible applications, such as a device that recognizes your kitty meowing to be let in and sends you a notification. The kit will be available in select stores in early December, retailing for $45 USD.

⇨ Google, The Keyword, “Introducing AIY Vision Kit: Make devices that see.”

Cyborg beetles

Cyborg Zophobas. © Nanyang Technological University.

The field of robotics is constantly trying to imitate creatures from the animal kingdom, with much effort devoted to authentically reproducing their movement patterns. An area of robotics that’s less explored (no doubt due to ethical considerations) is cybernetics, or cyborgs, which are hybrids of living beings and machines. This fascinating field is still in its infancy, as illustrated by recent work in Singapore to develop a robot that’s half insect, half machine. Researchers mounted a backpack of electronics on a small beetle measuring 2.5 cm and weighing half a gram. The “backpack” interfaces with the beetle’s antennae, which, when stimulated by an electric current, trigger the beetle’s escape impulse by tricking it into believing that it’s on a collision course with something, making it change direction. Powered by only two coin cell batteries, the mini-cyborg can be controlled for up to 8 hours, covering a distance of over one kilometre at an average speed of 4 centimetres per second.

In an interview with Spectrum, Tat Thang Vo Doan, a specialist in the field of cybernetics, lays out a scenario where this type of cyborg is put to use. “For a disaster scenario, we could release hundreds of flying or crawling cyborg insects to the site, since the unit price would be negligible when mass-produced. The insects can move freely themselves into the collapsed structures . . . Once an insect detects a victim, it will send an alarm to the rescue team. I know that it sounds like science fiction, but we are in fact working on it.”

⇨ IEEE Spectrum, “Controllable cyborg beetles for swarming search and rescue.”

Fighting smartphone addiction with “Substitute Phones”

Substitute phones. © Klemens Schillinger.

Vienna-based designer Klemens Schillinger has developed a series of five “Substitute Phones” to help people dealing with cellphone addiction. The phone-like objects have the same weight and shape as a smartphone, and contain stone beads that let your hands mimic the motions of swiping, scrolling and zooming on your device.

The physical stimulation provided by the stones may be calming and help with withdrawal symptoms for people who can’t bear to have their hands empty. The designer hopes his devices will help to overcome the compulsive “checking” behaviour that sees people check their devices for notifications, emails, and texts constantly throughout the day. The body of the phone is made of black polyoxymethylene (POM) plastic, or acetal, and the stones are made from howlite, a stone that resembles marble. The phones were created as part of a project for an exhibition at Vienna Design Week 2017.

⇨ Dezeen, “Klemens Schillinger’s Substitute Phone aims to help overcome smartphone addiction.”

The “Furlexa”: Amazon Alexa meets Furby in DIY project

Furlexa. © Zach Levine.

A web developer has just posted a video on DIY website howchoo featuring his creation, a hybrid of Amazon’s voice assistant Alexa and Furby, the talking stuffed animal that was once on many children’s Christmas toy lists. He used a Raspberry Pi, Amazon’s Alexa Voice Service software, an original Furby and several other components to create the mash-up he calls “Furlexa.” The creature now has a much more extensive vocabulary, an upgraded microphone and speaker, and can answer all the questions you’ve been too embarrassed to ask.

⇨ CNET, “This Alexa-Furby hybrid exists outside your nightmares.”